Digital Data Collection and the Maturing of a MERL Technology

by Christopher Robert, CEO of Dobility (SurveyCTO). This post was originally published on March 15, 2018, on the SurveyCTO blog.

Needs, markets, and innovation combine to produce technological change. This is as true in the international development sector as it is anywhere else. And within that sector, it’s as true in the broad category of MERL (monitoring and evaluation, research, and learning) technologies as it is in the narrower sub-category of digital data collection technologies. Here, I’ll consider the recent history of digital data collection technology as an example of MERL technology maturation – and as an example, more broadly, of the importance of market structure in shaping the evolution of a technology.

My basic observation is that, as digital data collection technology has matured, the same stakeholders have been involved – but the market structure has changed their relative power and influence over time. And it has been these very changes in power and influence that have changed the cost and nature of the technology itself.

First, when it comes to digital data collection in the development context, who are the stakeholders?

- Donors. These are the primary actors who fund development work, evaluation of development policies and programs, and related research. There are mega-actors like USAID, Gates, and the UN agencies, but also many other charities, philanthropies, and public or nonprofit actors, from Catholic Charities to the U.S. Centers for Disease Control and Prevention.

- Developers. These are the designers and software engineers involved in producing technology in the space. Some are students or university faculty, some are consultants, many work full-time for nonprofits or businesses in the space. (While some work on open-source initiatives in a voluntary capacity, that seems quite uncommon in practice. The vast majority of developers working on open-source projects in the space get paid for that work.)

- Consultants and consulting agencies. These are the technologists and other specialists who help research and program teams use technology in the space. For example, they might help to set up servers and program digital survey instruments.

- Researchers. These are the folks who do the more rigorous research or impact evaluations, generally applying social-science training in public health, economics, agriculture, or other related fields.

- M&E professionals. These are the people responsible for program monitoring and evaluation. They are most often part of an implementing program team, but it’s also not uncommon to share more centralized (and specialized) M&E teams across programs or conduct outside evaluations that more fully separate some M&E activities from the implementing program team.

- IT professionals. These are the people responsible for information technology within those organizations implementing international development programs and/or carrying out MERL activities.

- Program beneficiaries. These are the end beneficiaries meant to be aided by international development policies and programs. The vast majority of MERL activities are ultimately concerned with learning about these beneficiaries.

These different stakeholders have different needs and preferences, and the market for digital data collection technologies has changed over time – privileging different stakeholders in different ways. Two distinct stages seem clear, and a third is coming into focus:

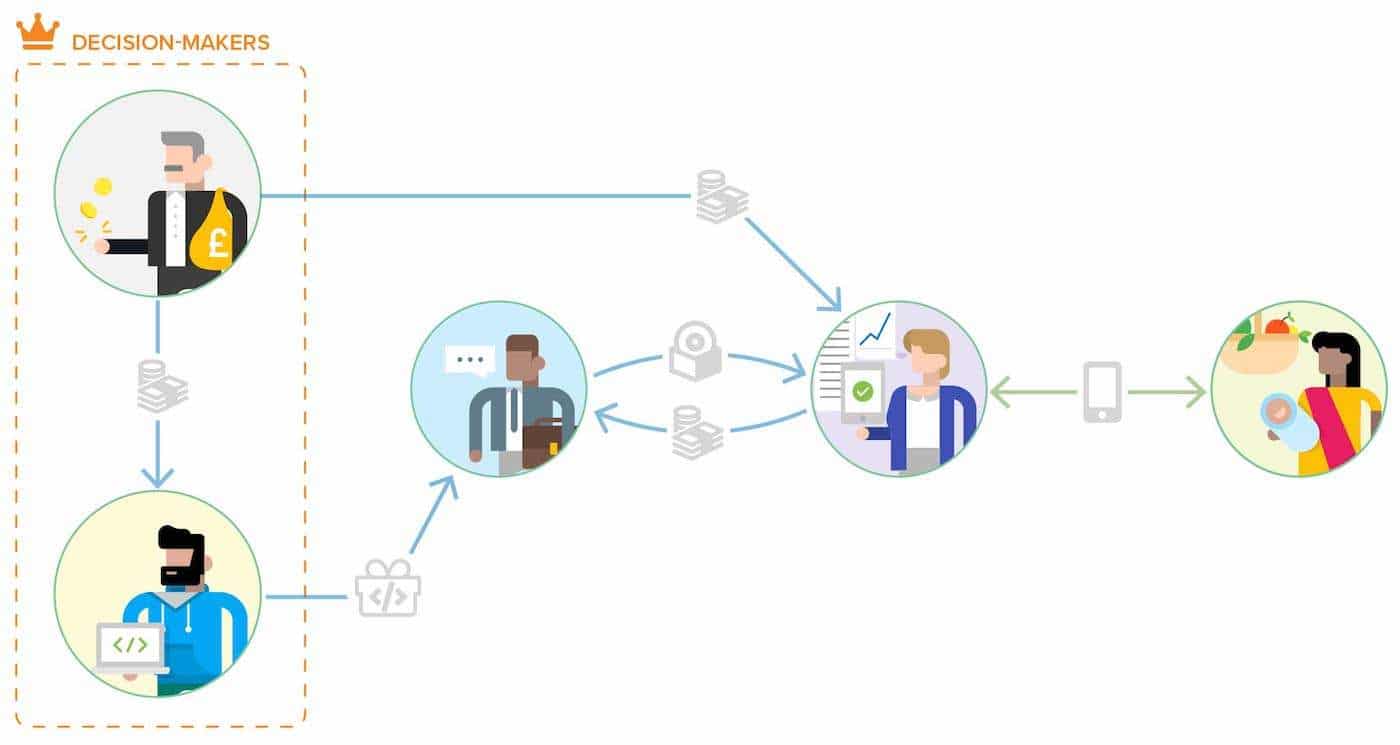

- The early days of donor-driven pilots and open source. These were the days of one-offs, building-your-own, and “pilotitis,” where donors and developers were effectively in charge and there was a costly additional layer of technical consultants between the donors/developers and the researchers and M&E professionals who had actual needs in the field. Costs were high, and some combination of donor and developer preferences reigned supreme.

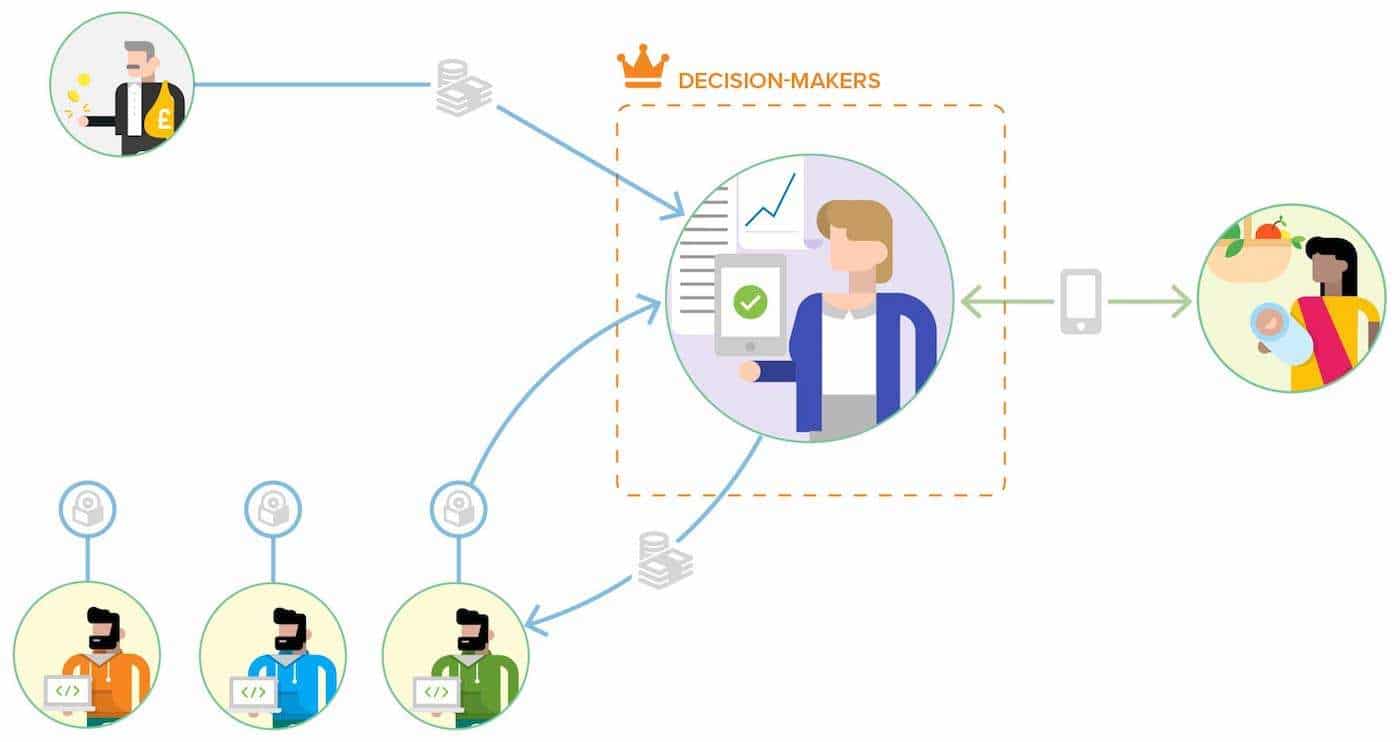

- Intensifying competition in program-adopted professional products. Over time, professional products emerged that began to directly market to – and serve – researchers and M&E professionals. Costs fell with economies of scale, and the preferences of actual users in the field suddenly started to matter in a more direct, tangible, and meaningful way.

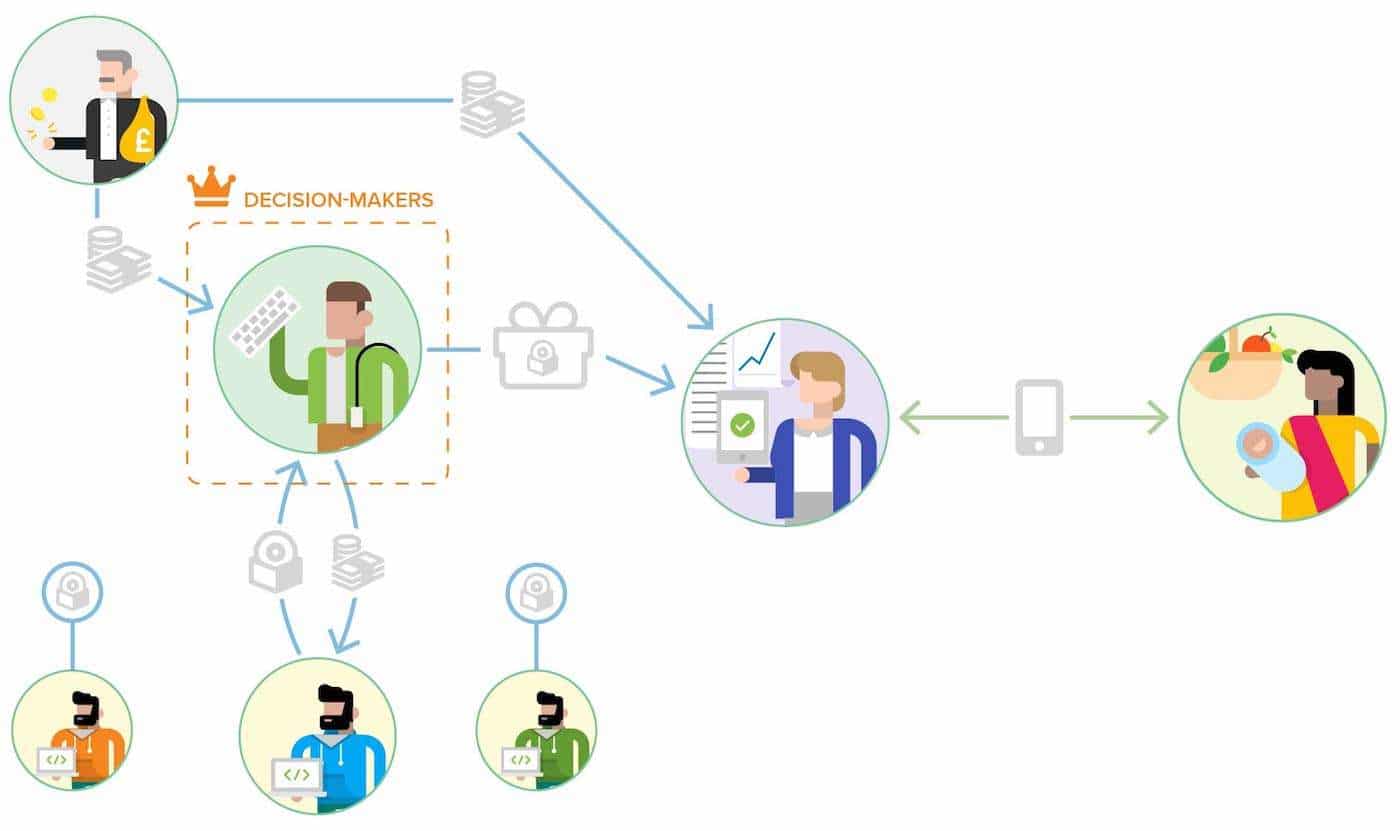

- Intensifying competition in IT-adopted professional products. Now that use of affordable, accessible, and effective data-collection technology has become ubiquitous, it’s natural for IT organizations to begin viewing it as a kind of core organizational infrastructure, to be adopted, supported, and managed by IT. This means that IT’s particular preferences and needs – like scale, standardization, integration, and compliance – start to become more central, and costs unfortunately rise.

While I still consider us to be in the glory days of the middle stage, where costs are low and end-users matter most, there are still plenty of projects and organizations living in that first stage of more costly pilots, open source projects, and one-offs. And I think that the writing’s very much on the wall when it comes to our progression toward the third stage, where IT comes to drive the space, innovation slows, and end-user needs are no longer dominant.

Full disclosure: I myself have long been a proponent of the middle phase, and I am proud that my social enterprise has been able to help graduate thousands of users from that costly first phase. So my enthusiasm for the middle phase began many years ago and in fact helped to launch Dobility.

THE EARLY DAYS OF DONOR-DRIVEN PILOTS AND OPEN SOURCE

In the beginning, there were pioneering developers, patient donors, and program or research teams all willing to take risks and invest in a better way to collect data from the field. They took cutting-edge technologies and found ways to fit them into some of the world’s most difficult, least-cutting-edge settings.

In these early days, it mattered a lot what could excite donors enough to open their checkbooks – and what would keep them excited enough to keep the checks coming. So the vital need for large and ongoing capital injections gave donors a lot of influence over what got done.

Developers also had a lot of sway. Donors couldn’t do anything without them, and they also didn’t really know how to actively manage them. If a developer said “no, that would be too hard or expensive” or even “that wouldn’t work,” what could the donor really say or do? They could cut off funding, but that kind of leverage only worked for the big stuff, the major milestones and the primary objectives. For that stuff, donors were definitely in charge. But for the hundreds or thousands of day-to-day decisions that go into any technology solution, it was the developers who were effectively in charge.

Actual end-users in the field – the researchers and M&E professionals who were piloting or even trying to use these solutions – might have had some solid ideas about how to guide the technology development, but they had essentially no levers of control. In practice, the solutions being built by the developers were often so technically complex to configure and use that there was an additional layer of consultants (technical specialists) sitting between the developers and the end-users. But even where there wasn’t, the developers’ inevitable “no, sorry, that’s not feasible,” “we can’t realistically fit that into this release,” or simple silence was typically the end of the story for users in the field. What could they do?

Unfortunately, without meaning any harm, most developers react by pushing back on whatever is contrary to their own preferences (I say this as a lifelong developer myself). Something might seem like a hassle, or architecturally unclean, and so a developer will push back, say it’s a bad idea, drag their heels, even play out the clock. In the past five years of Dobility, there have been hundreds of cases where a developer has said something to the effect of “no, that’s too hard” or “that’s a bad idea” to things that have turned out to (a) take as little as an hour to actually complete and (b) provide massive amounts of benefit to end-users. There’s absolutely no malice involved, it’s just the way most of them/us are.

This stage lasted a long time – too long, in my view! – and an entire industry of technical consultants and paid open-source contributors grew up around an approach to digital data collection that didn’t quite embrace economies of scale and never quite privileged the needs or preferences of actual users in the field. Costs were high and complaints about “pilotitis” grew louder.

INTENSIFYING COMPETITION IN PROGRAM-ADOPTED PROFESSIONAL PRODUCTS

But ultimately, the protagonists of the early days succeeded in establishing and honing the core technologies, and in the process they helped to reveal just how much was common across projects of different kinds, even across sectors. Some of those protagonists also had the foresight and courage to release their technologies with the kinds of permissive open-source licenses that would allow professionalization and experimentation in service and support models. A new breed of professional products directly serving research, program, and M&E teams was born – in no small part out of a single, tremendously successful open-source project, Open Data Kit (ODK).

These products tended to be sold directly to end-users, and were increasingly intended for those end-users to be able to use themselves, without the help of technical staff or consultants. For traditionalists of the first stage, this was a kind of heresy: it was considered gauche at best and morally wrong at worst to charge money for technology, and it was seen as some combination of impossible and naive to think that end-users could effectively deploy and manage these technologies without technical assistance.

In fact, the new professional products were not designed to be used entirely without assistance. But they were designed to require as little assistance as possible, and the assistance came with the product instead of being provided by a separate (and separately compensated) internal or external team.

A particularly successful breed of products, including SurveyCTO, came to use a “Software as a Service” (SaaS) model that streamlined both product delivery and support, ramping up economies of scale and driving down costs in the process. When such products offered technical support free of charge as part of the purchase or subscription price, there was a built-in incentive to improve the product: since tech support was so costly to deliver, improving the product such that it required less support became one of the strongest incentives driving product development. Those who adopted the SaaS model not only had to earn every dollar of revenue from end-users, but they had to keep earning that revenue month in, month out, year in, year out, in order to retain business and therefore the revenue needed to pay the bills. (Read about other SaaS benefits for M&E in this recent DevResults post.)

It would be difficult to overstate the importance of these incentives to improve the product and earn revenue from end-users. They are nothing short of transformative. Particularly once there is active competition among vendors, users are squarely in charge. They control the money, their decisions make or break vendors, and so their preferences and needs are finally at the center.

Now, in addition to the “it’s heresy to charge money or think that end-users can wield this kind of technology” complaints that used to be more common, there started to be a different kind of complaint: there are too many solutions! It’s too overwhelming, how many digital data collection solutions there are now. Some go so far as to decry the duplication of effort, to claim that the free market is inefficient or failing; they suggest that donors, consultants, or experts be put back in charge of resource allocation, to re-impose some semblance of sanity on the space.

But meanwhile, we’ve experienced a kind of golden age in terms of who can afford digital data collection technology, who can wield it effectively, and in what kinds of settings. There are a dizzying number of solutions – but most of them cater to a particular type of need, or have optimized their business model in a particular way. Some, like us, rely nearly 100% on subscription revenues; others fund themselves primarily from service provision; still others are trying interesting ways to cross-subsidize from bigger, richer users so that they can offer free or low-cost options to smaller, poorer ones. We’ve overcome pilotitis, economies of scale are finally kicking in, and I think that the social benefits have been tremendous.

INTENSIFYING COMPETITION IN IT-ADOPTED PROFESSIONAL PRODUCTS

It was the success of the first stage that laid the foundation for the second stage, and so too it has been the success of the second stage that has laid the foundation for the third: precisely because digital data collection technology has become so affordable, accessible, and ubiquitous, organizations are increasingly thinking that it should be IT departments that procure and manage that technology.

Part of the motivation is the very proliferation of options that I mentioned above. While economics and the historical success of capitalism have taught us that a marketplace thriving with competition is most often a very good thing, it’s less clear that a wide variety of options is good within any single organization. At the very least, there are very good reasons to want to standardize some software and processes, so that different people and teams can more effortlessly share knowledge and collaborate, and so that there can be some economies of scale in training, support, and compliance.

Imagine if every team used its own product and file format for writing documents, for example. It would be a total disaster! The frictions across and between teams would be enormous. And as data becomes more and more core to the operations of more organizations – the way that digital documents became core many years ago – it makes sense to want to standardize and scale data systems, to streamline integrations, just for efficiency purposes.

Growing compliance needs only up the ante. The arrival of the EU’s General Data Protection Regulation (GDPR) this year, for example, raises the stakes for EU-based (or even EU-touching) organizations considerably, imposing stiff new data privacy requirements and steep penalties for violations. Coming into compliance with GDPR and other data-security regulations will be effectively impossible if IT can’t play a more active role in the procurement, configuration, and ongoing management of data systems; and it will be impractical for IT to play such a role for a vast array of constantly shifting technologies. After all, IT will require some degree of stability and scale.

But if IT takes over digital data collection technology, what changes? Does the golden age come to an end?

Potentially. And there are certainly very good reasons to worry.

First, changing who controls the dollars – who’s in charge of procurement – threatens to entirely up-end the current regime, where end-users are directly in charge and their needs and preferences are catered to by a growing body of vendors eager to earn their business.

It starts with the procurement process itself. When IT is in charge, procurement processes are long, intensive, and tend to result in a “winner take all” contract. After all, it makes sense that IT departments would want to take their time and choose carefully; they tend to be choosing solutions for the organization as a whole (or at least for some large class of users within the organization), and they most often intend to choose a solution, invest heavily in it, and have it work for as long as possible.

This very natural and appropriate method that IT uses to procure is radically different from the method used by research, program, and M&E teams. And it creates a radically different dynamic for vendors.

Vendors first have to buy into the idea of investing heavily in these procurement processes – which some may simply choose not to do. Then they have to ask themselves, “what do these IT folks care most about?” In order to win these procurements, they need to understand the core concerns driving the purchasing decision. As in the old saying “nobody ever got fired for choosing IBM,” safety, stability, and reputation are likely to be very important. Compliance issues are likely to matter a lot too, including the vendor’s established ability to meet new and evolving standards. Integrations with corporate systems are likely to count for a lot too (e.g., integrating with internal data and identity-management systems).

Does it still matter how well the vendor meets the needs of end-users within the organization? Of course. But note the very important shift in the dynamic: vendors now have to get the IT folks to “yes” and so would be quite right to prioritize meeting their particular needs. Nobody will disagree that end-users ultimately matter, but meanwhile the focus will be on the decision-makers. The vendors that meet the decision-makers’ needs will live, the others will die. That’s simply one aspect of how a free market works.

Note also the subtle change in dynamic once a vendor wins a contract: the SaaS model where vendors had to re-earn every customer’s revenue month in, month out, is largely gone now. Even if the contract is formally structured as a subscription or has lots of exit options, the IT model for technology adoption is inherently stickier. There is a lot more lock-in in practice. Solutions are adopted, they’re invested in at large scale, and nobody wants to walk away from that investment. Innovation can easily slow, and nobody wants to repeat the pain of procurement and adoption in order to switch solutions.

And speaking of the pain of the procurement process: costs have been rising. After all, the procurement process itself is extremely costly to the vendor – especially when it loses, but even when it wins. So that’s got to get priced in somewhere. And then all of the compliance requirements, all of the integrations with corporate systems, all of that stuff’s really expensive too. What had been an inexpensive, flexible, off-the-shelf product can easily become far more expensive and far less flexible as it works itself through IT and compliance processes.

What had started out on a very positive note (“let’s standardize and scale, and comply with evolving data regulations”) has turned in a decidedly dystopian direction. It’s sounding pretty bad now, and you wouldn’t be wrong to think “wait, is this why a bunch of the products I use for work are so much more frustrating than the products I use as a consumer?” or “if Microsoft had to re-earn every user’s revenue for Excel, every month, how much better would it be?”

While I don’t think there’s anything wrong with the instinct for IT to take increasing control over digital data collection technologies, I do think that there’s plenty of reason to worry. There’s considerable risk that we lose the deep user orientation that has just been picking up momentum in the space.

WHERE WE’RE HEADED: STRIKING A BALANCE

If we don’t want to lose the benefits of a deep user orientation in this particular technology space, we will need to work pretty hard – and be awfully clever – to avoid it. People will say “oh, but IT just needs to consult research, program, and M&E teams, include them in the process,” but that’s hogwash. Or rather, it’s woefully inadequate. The natural power of those controlling resources to bend the world to their preferences and needs is just too powerful for mere consultation or inclusion to overcome.

And the thing is: what IT wants and needs is good. So the solution isn’t just “let’s not let them anywhere near this, let’s keep the end-users in charge.” No, that approach collapses under its own weight eventually, and certainly it can’t meet rising compliance requirements. It has its own weaknesses and inefficiencies.

What we need is an approach – a market structure – that allows the needs of IT and the needs of end-users both to matter to appropriate degrees.

With SurveyCTO, we’re currently in an interesting place: we’re becoming split between serving end-users and serving IT organizations. And I suppose as long as we’re split, with large parts of our revenue coming from each type of decision-maker, we remain incentivized to keep meeting everybody’s needs. But I see trouble on the horizon: the IT organizations can pay more, and more organizations are shifting in that direction… so once a large-enough proportion of our revenue starts coming from big, winner-take-all IT contracts, I fear that our incentives will be forever changed. In the language of economics, I think that we’re currently living in an unstable equilibrium. And I really want the next equilibrium to serve end-users as well as the last one!
